if(.) has a small runtime cost for literally executing the comparison instruction. If you have a shader that would otherwise be a handful of instructions, but it's blasted to a couple of dozen by runtime-toggling of various features, you will notice a difference!

if(.) needs more registers, both to perform the instruction and potentially to store the result for longer if you reuse it (e.g. to check a feature activation with if(a & b) where a and b are also individual features). But this is only relevant if the extra if is added on the already 'most register hungry' path: registers are allocated statically, so only the most complex path in your shader determines how many registers your GPU program needs in its entirety. Also, on archs that have scalar and vector registers (most of them by now), this is only relevant for the scalar part, since the instructions will likely work on uniform values. After the result is used it is discarded and the register can be reused.

if(.) has a small instruction cache penalty, obviously, because your code has more instructions. This is not often talked about, but it can be relevant if you are running a really large program where different subgroups might regularly be operating in completely different places in the code. Adding even more instructions can make it worse. I have not actually seen this in practice but I've seen people claim it exists as an issue :-P

if(.) implies that you have more uniform data to pass to the program (you need some way to decide which features are enabled).
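
To make the difference concrete, here is a minimal sketch in plain C++ (so it runs without a GPU) of the same toggle written both ways. The names `kFog`, `kTint`, `ENABLE_FOG`, `ENABLE_TINT`, `shade_runtime` and `shade_static` are made up for illustration; in a real shader the flags would arrive as uniform data and the macros would be set per compiled permutation.

```cpp
// Hypothetical feature bits; in a shader these would be passed in as a uniform.
const unsigned kFog  = 1u << 0;
const unsigned kTint = 1u << 1;

// Hypothetical compile-time switches; each combination is a separate build.
#define ENABLE_FOG  1
#define ENABLE_TINT 0

// Runtime toggle: one program, but every call tests the flags and the
// code for every feature is always present in the binary.
float shade_runtime(float base, unsigned flags) {
    float c = base;
    if (flags & kFog)  c *= 0.5f;   // extra compare + branch per feature
    if (flags & kTint) c += 0.25f;
    return c;
}

// Compile-time toggle: disabled features are removed by the preprocessor,
// so there is no test, no extra code and no flag data to pass in.
float shade_static(float base) {
    float c = base;
#if ENABLE_FOG
    c *= 0.5f;
#endif
#if ENABLE_TINT
    c += 0.25f;
#endif
    return c;
}
```

Both functions compute the same result for the fog-only configuration; the runtime version pays for the comparisons, the extra code and the flag parameter, while the compile-time version pays with one build per flag combination.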

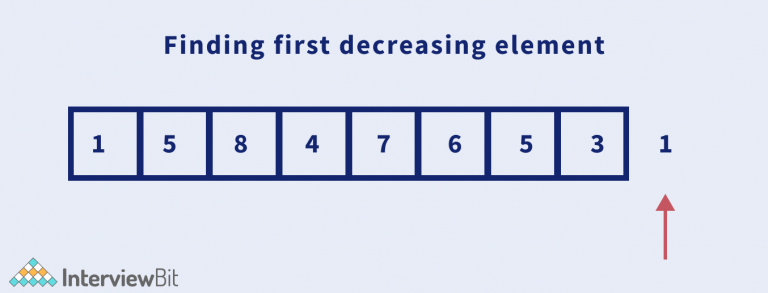

You just have to get an understanding of how an if(.) is different from a compile-time #if and where they end up costing you time or memory, as listed above.

As you note, benchmarking is the best. Nvidia Nsight is a really amazing free tool that is well worth the time to learn: you test the different versions and observe how each one leads to cache misses or pure perf hits. I've done a lot of very low-level optimization of CUDA code using it and learned a lot about how the SMs and caches work along the way, and managed to answer a lot of questions that pop up all the time, like when to put stuff in the constant cache, when to use local shared memory etc.

On the other hand, if you have the "infrastructure" to do it, there is nothing wrong with having 10,000 shaders, I guess. They are small, and the cost of switching between them should be minimal compared to the rendered triangles, unless you have a bad engine doing bad sorting where you need a different shader for every tri you're rendering, but that shouldn't happen.

The feature on/off case of conditionals is a bit special in that it shouldn't cause a big hit on perf: the condition is the same for every thread, so partial warps won't have to execute serially for each different if-condition within the warp. But this changed somewhere along Nvidia's development and I'm not sure anymore how exactly diverging ifs within a warp get hit (or not):

"Independent Thread Scheduling can lead to a rather different set of threads participating in the executed code than intended if the developer made assumptions about warp-synchronicity of previous hardware architectures. In particular, any warp-synchronous code (such as synchronization-free, intra-warp reductions) should be revisited to ensure compatibility with Volta and beyond."

If your feature actually has to perform texture lookups and stuff having effects outside the SM core (even if it just means flushing some cache lines), it will have some cost to run it in a float-blend kind of situation compared to simply "shutting it off" with a boolean and if().
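
The float-blend versus boolean point can be sketched on the CPU as well. This is only an illustration under assumptions: `expensive_lookup` is a hypothetical stand-in for a texture fetch, and the counter `g_lookups` exists purely to make the hidden cost visible.

```cpp
#include <cstddef>

// Counts how often the stand-in "texture fetch" actually runs.
std::size_t g_lookups = 0;

// Hypothetical stand-in for work with effects outside the core (e.g. a texture lookup).
float expensive_lookup(float x) {
    ++g_lookups;
    return x * 2.0f;
}

// Float-blend: the feature always executes; a weight of 0 only hides its result.
float apply_blend(float base, float weight) {
    return base + weight * expensive_lookup(base);
}

// Boolean + if(): a disabled feature is skipped, so the lookup never happens.
float apply_branch(float base, bool enabled) {
    if (enabled) {
        return base + expensive_lookup(base);
    }
    return base;
}
```

With a weight of 0 the blend version returns the same value as the disabled branch version, but it still performs the lookup; the branch version does not.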
